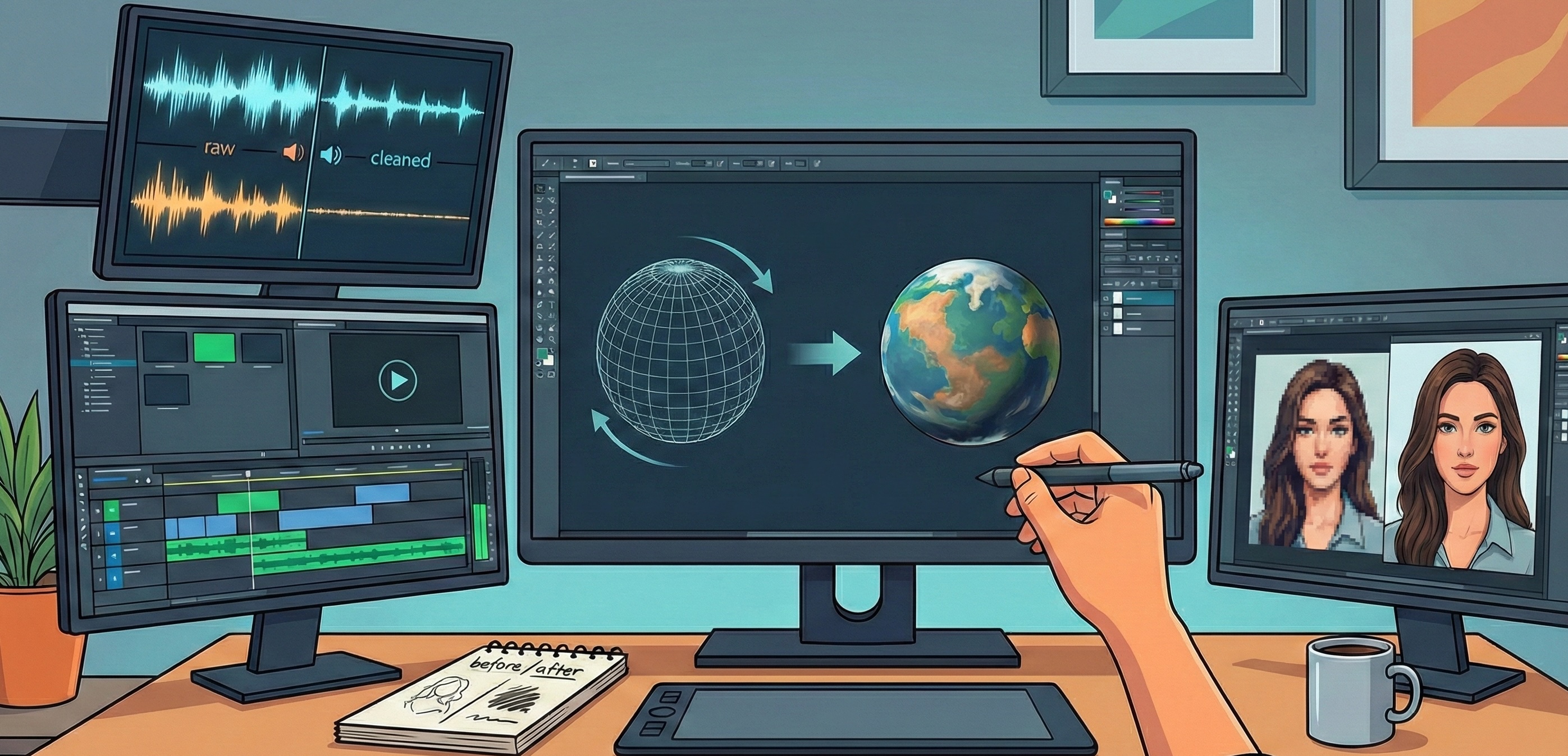

Every week brings another AI tool promising to revolutionise creative workflows. Image generators that produce photorealistic output in seconds. Video tools that turn text prompts into motion. 3D generators that create assets from a single reference image.

The demos are genuinely impressive. But for creative teams evaluating whether these tools belong in actual production workflows, the question isn’t “what can it do?” It’s “can I rely on it?”

Here’s a practical survey of where the AI creative tools landscape sits right now, focused on what matters for professional work rather than what makes for impressive social media posts.

Image Generation: The Most Mature Category

Image generation has had the longest runway, and it shows. This is where AI creative tools are closest to genuinely production-ready.

Midjourney remains the default for stylised, artistic output. The aesthetic quality is consistently high, and the community has developed sophisticated prompting techniques. The Discord-based interface is clunky for professional workflows, but the output quality often justifies the friction. Best for: concept exploration, mood boards, and stylised illustrations where the “AI look” is acceptable or even desirable.

Adobe Firefly matters for a different reason: it’s trained on licensed content, which addresses the copyright uncertainty hanging over other generators. The output is often less distinctive than MidJourney, but for commercial work where legal clarity matters, that trade-off can be worthwhile. Integration with Creative Cloud means it fits existing workflows.

Leonardo.ai offers more control over output, with fine-tuning capabilities and consistent character generation that other tools struggle with. For projects needing multiple images with visual continuity, this control matters.

Stable Diffusion is the open-source option, meaning it can run locally with no usage limits and complete privacy. The trade-off is technical complexity. For teams with the capability to manage it, the flexibility is significant.

The production reality: Image generation works well for ideation, concepting, and assets where some variation is acceptable. It struggles with precise brand requirements, exact colour matching, and anything requiring pixel-perfect consistency. Most professional workflows use AI generation as a starting point, with significant human refinement before final delivery.

Video Generation: Impressive but Immature

Video is where the gap between demos and production is widest. The progress over the past year has been remarkable, but reliability remains a challenge.

Runway (Gen-3 Alpha) produces the most consistently usable output for short clips. Motion quality has improved significantly, and the image-to-video capability is genuinely useful for bringing static concepts to life. The limitation: duration. Useful clips are typically under ten seconds, and maintaining coherence beyond that is unreliable.

Veo (Google) and Sora (OpenAI) have shown impressive demos, but access remains limited and the gap between curated demos and average output is substantial. Worth watching, but not yet tools you can build workflows around.

Pika and Kling are pushing capabilities forward, particularly for specific use cases like character animation. The landscape is shifting quickly enough that any specific recommendation would be outdated within months.

The production reality: Video generation is useful for quick concepts, social content where imperfection is acceptable, and rough animatics to communicate ideas. It’s not ready for hero content, broadcast work, or anything requiring precise creative control. The “uncanny valley” problem, where output looks almost right but something is subtly wrong, remains significant.

3D Generation: Early Days

3D generation is the newest frontier, and expectations should be calibrated accordingly.

Tripo3D and Meshy can generate 3D models from text or images. The output is genuinely useful for rough prototyping and concepting. Getting from an AI-generated mesh to a production-ready asset still requires significant cleanup: topology issues, UV mapping problems, and geometry that doesn’t behave well in animation pipelines.

Luma AI approaches 3D from capture rather than generation, turning video into 3D scenes. For specific use cases like product visualisation or environment scanning, the results can be impressive.

The production reality: These tools accelerate the early stages of 3D work but don’t replace modelling expertise. Think of them as sophisticated reference generators rather than asset pipelines. A skilled 3D artist using these tools is more productive. Someone without 3D fundamentals will produce assets that cause problems downstream.

Audio and Voice: Quietly Useful

Audio generation gets less attention but may be closer to production-ready than video or 3D.

ElevenLabs produces synthetic voice that’s often indistinguishable from human recording. For scratch tracks, prototypes, internal presentations, and some final applications, the quality is there. The ethical considerations around voice cloning are real and worth taking seriously.

Suno and Udio generate music that’s surprisingly competent for background and mood-setting purposes. They won’t replace composers for distinctive work, but they’re useful for placeholder audio and for projects where music is functional rather than featured.

The production reality: Audio tools are production-ready for more use cases than most people realise. The quality threshold for acceptable audio is often lower than for visuals, and these tools clear it for many applications.

The Consistent Pattern

Across all these categories, the same pattern emerges:

Ideation and exploration: AI tools excel here. Generating options quickly, exploring directions, communicating concepts before committing to production.

Rough and placeholder content: Useful for internal work, client presentations, testing, and anywhere that “good enough” is genuinely good enough.

Final delivery: Still requires human expertise, refinement, and quality control. The gap between AI output and production-ready assets is real.

Consistency and control: This is where AI tools struggle most. Generating one good image is straightforward. Generating twenty images that feel like they belong to the same campaign is hard. Matching exact brand colours, maintaining character consistency across scenes, and hitting precise creative specifications all remain challenges.

What This Means for Creative Teams

The tools are genuinely useful. They’re also genuinely limited. The risk isn’t that AI will replace creative expertise. It’s that teams will over-rely on tools that produce output that looks finished but isn’t.

The creative professionals getting the most value from these tools are those who understand their limitations well enough to deploy them appropriately. They use AI generation for the right tasks, apply human judgment to evaluate output, and know when to stop iterating with prompts and start working with traditional tools.

That judgment comes from creative expertise. The tools don’t teach you what good looks like. They just make it faster to generate options once you already know.

Exploring AI tools for your creative workflow?

Contact our team to discuss what’s production-ready and what’s still hype.

Simon Paul is a Business Solutions & Technology Specialist at Code Brewery with more than 25 years of experience taking creative concepts into production. He’s found that the most useful tools are the ones that make talented people more productive, not the ones that promise to replace them. Reach out to Simon to discuss how emerging creative technology might fit your next campaign.